Custom LLM Connection

The Custom LLM node talks to any OpenAI-compatible API: Groq, Perplexity, Ollama, Azure OpenAI, or your own server. You store the provider API Key and Base URL in a Custom LLM connection.

No provider API key?

Use a Plug and Play (PnP) app node from the catalog instead. You don't enter a provider key there, because usage is billed through PnP. For OpenAI models, see ChatGPT (Send Message to ChatGPT).

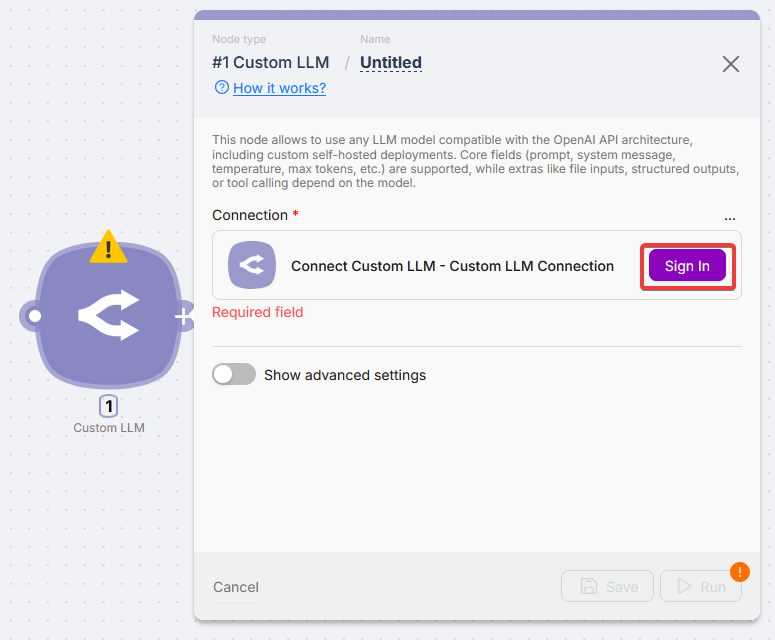

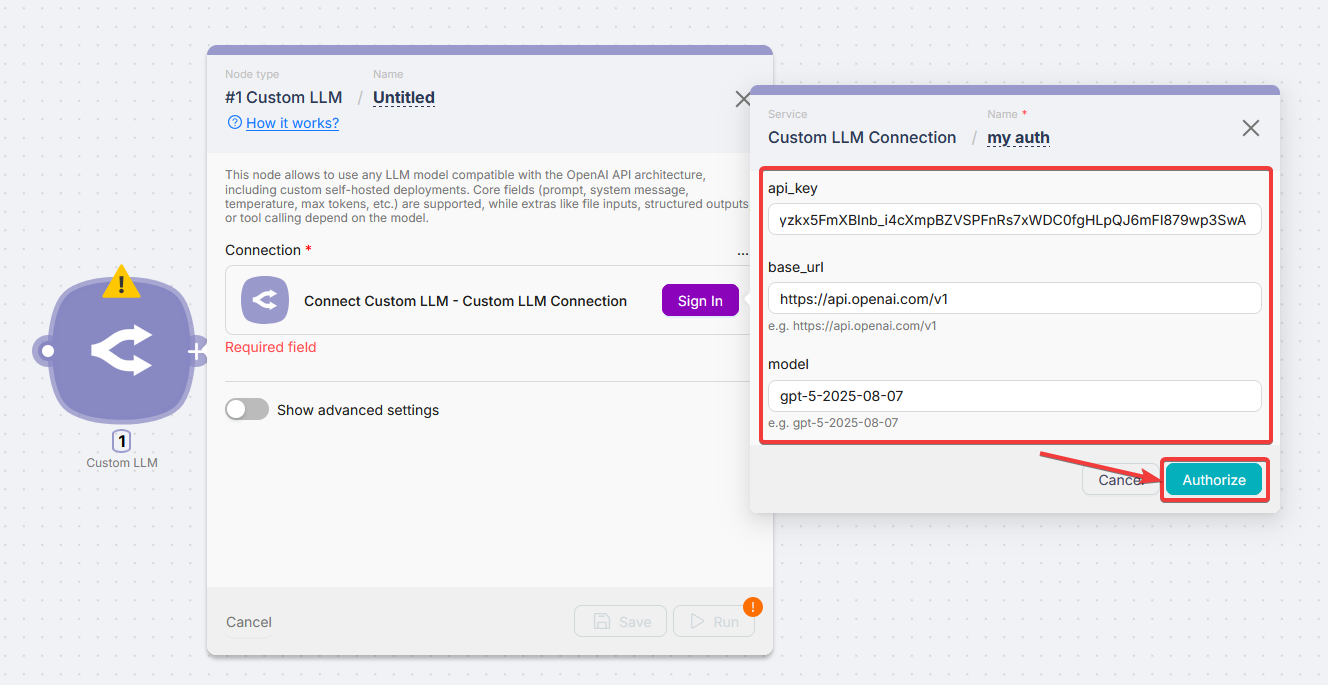

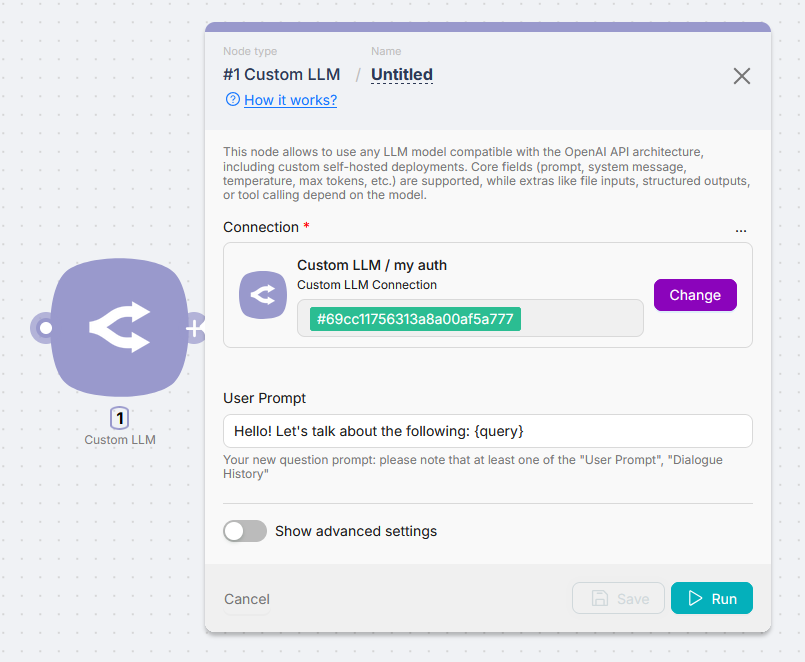

Connection setup

Before running the node, create a Custom LLM connection with provider credentials.

| Field | Description |
|---|---|
| API Key | API key issued by the provider |
| Base URL | OpenAI-compatible base URL |
| Model | Provider model ID (e.g., llama-3.3-70b-versatile) |
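The three connection fields map onto a standard OpenAI-compatible HTTP request. The sketch below uses only Python's standard library and assumes the provider accepts `Authorization: Bearer <API Key>` and serves chat completions at `<Base URL>/chat/completions`, which is the OpenAI convention (Azure OpenAI uses an `api-key` header instead). `build_chat_request` is a hypothetical helper for illustration, not part of the node.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST to an OpenAI-compatible chat endpoint.

    Assumption: the provider follows the OpenAI convention of
    <base URL>/chat/completions with Bearer-token auth.
    """
    url = base_url.rstrip("/") + "/chat/completions"
    body = {
        "model": model,  # the Model field, e.g. a Groq model ID
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # the API Key field
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request(
        "https://api.groq.com/openai/v1", "YOUR_API_KEY",
        "llama-3.3-70b-versatile", "Say hello.",
    )
    print(req.full_url)  # https://api.groq.com/openai/v1/chat/completions
    # To actually send it: urllib.request.urlopen(req)
```

If a request built this way fails outside the node, the connection values (not the workflow) are usually the first thing to check.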

Base URL examples

| Provider | Base URL |
|---|---|
| OpenAI | https://api.openai.com/v1 |
| Groq | https://api.groq.com/openai/v1 |
| Perplexity | https://api.perplexity.ai/chat/completions |
| Azure OpenAI | https://[YOUR-RESOURCE].openai.azure.com/openai/deployments/[DEPLOYMENT-NAME]/chat/completions?api-version=2024-02-15 |
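The rows above come in two forms: the OpenAI and Groq entries are base URLs, while the Perplexity and Azure entries already include the `/chat/completions` path. If you call these endpoints from your own code, a hypothetical helper like `resolve_chat_endpoint` can accept either form. This is an illustration of the two URL shapes, not a description of how the node parses the Base URL field.

```python
from urllib.parse import urlsplit


def resolve_chat_endpoint(base_url: str) -> str:
    """Hypothetical rule: keep URLs whose path already ends in
    /chat/completions (Perplexity and Azure rows, query string included);
    otherwise append /chat/completions to the base URL."""
    path = urlsplit(base_url).path.rstrip("/")
    if path.endswith("/chat/completions"):
        return base_url
    return base_url.rstrip("/") + "/chat/completions"


if __name__ == "__main__":
    for url in [
        "https://api.openai.com/v1",
        "https://api.groq.com/openai/v1",
        "https://api.perplexity.ai/chat/completions",
    ]:
        print(resolve_chat_endpoint(url))
```

Checking the URL path (via `urlsplit`) rather than the raw string keeps the Azure `?api-version=...` query from hiding the `/chat/completions` suffix.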

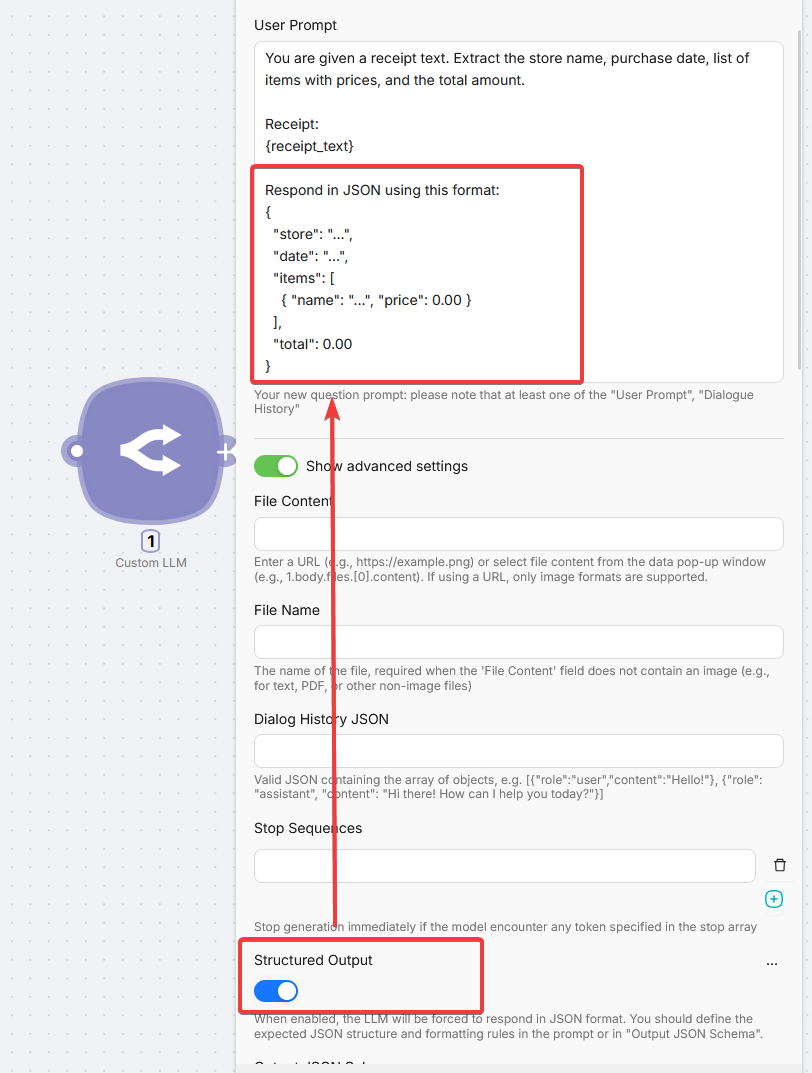

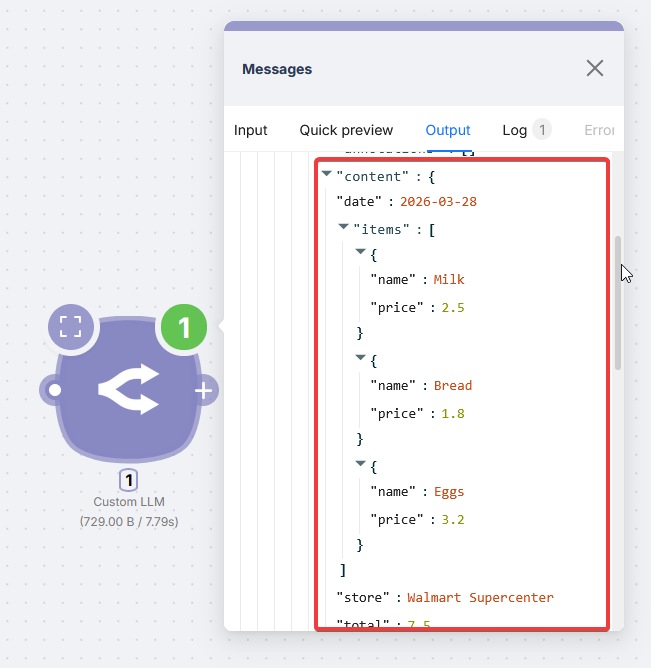

Getting structured output

Enable Structured Output and ask for JSON in the prompt when downstream nodes need structured data.

Example (receipt):

```text
You are given a receipt text. Extract the store name, purchase date, list of items with prices, and the total amount.

Receipt:

{receipt_text}

Respond in JSON using this format:

{
  "store": "...",
  "date": "...",
  "items": [
    { "name": "...", "price": 0.00 }
  ],
  "total": 0.00
}
```
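Outside the node, the same receipt prompt can be filled and its reply checked with a few lines of Python. A minimal sketch; `build_prompt`, `parse_receipt`, and `REQUIRED_KEYS` are hypothetical names. Note the design choice: `str.format()` would raise on the literal JSON braces in the template, so the `{receipt_text}` placeholder is substituted with `str.replace()` instead.

```python
import json

# The receipt prompt above; {receipt_text} is the only placeholder.
PROMPT_TEMPLATE = """You are given a receipt text. Extract the store name, purchase date, list of items with prices, and the total amount.

Receipt:

{receipt_text}

Respond in JSON using this format:

{
  "store": "...",
  "date": "...",
  "items": [
    { "name": "...", "price": 0.00 }
  ],
  "total": 0.00
}"""

REQUIRED_KEYS = {"store", "date", "items", "total"}


def build_prompt(receipt_text: str) -> str:
    # Plain substitution: str.format() would treat the JSON braces as fields.
    return PROMPT_TEMPLATE.replace("{receipt_text}", receipt_text)


def parse_receipt(reply: str) -> dict:
    """Parse the model's reply; invalid JSON raises json.JSONDecodeError,
    which is a subclass of ValueError."""
    data = json.loads(reply)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"reply is missing keys: {sorted(missing)}")
    return data
```

A downstream step can then read fields such as `parse_receipt(reply)["total"]` and catch `ValueError` for malformed or incomplete replies.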

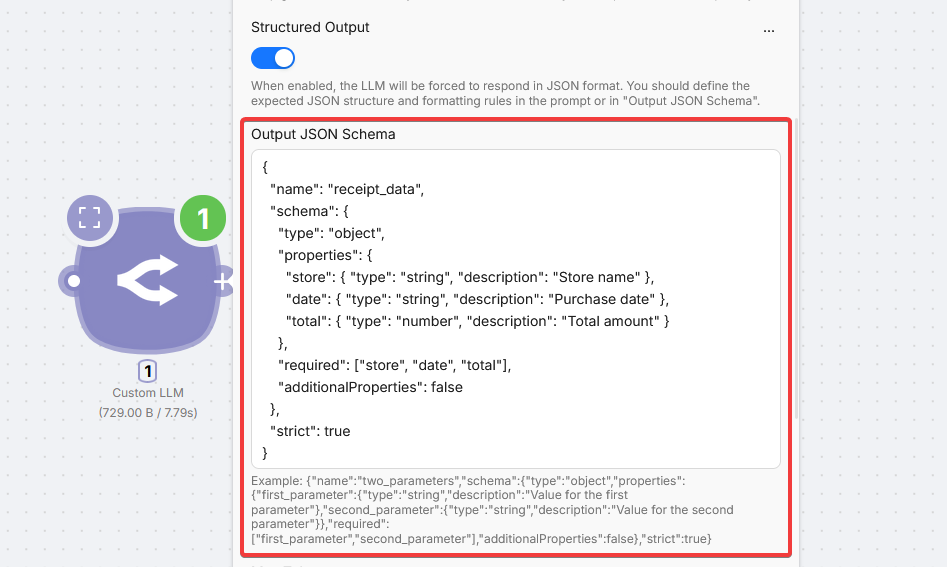

Advanced: Output JSON Schema

Only some models and providers support a JSON Schema for output; check your provider's documentation.

```json
{
  "name": "receipt_data",
  "schema": {
    "type": "object",
    "properties": {
      "store": { "type": "string", "description": "Store name" },
      "date": { "type": "string", "description": "Purchase date" },
      "total": { "type": "number", "description": "Total amount" }
    },
    "required": ["store", "date", "total"],
    "additionalProperties": false
  },
  "strict": true
}
```

Fields

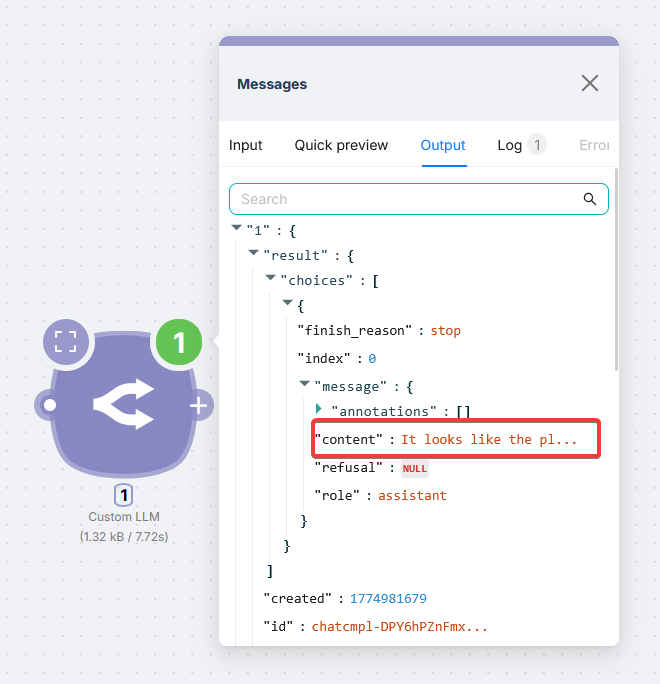

Core options (prompt, temperature, max tokens) work with any OpenAI-compatible API. Support for files, structured output, and tools depends on the model and provider.
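The `receipt_data` schema object (`name`, `schema`, `strict`) has the same shape as the `json_schema` field in OpenAI's Structured Outputs `response_format`. The sketch below assumes a provider that follows that OpenAI format (others may differ or not support it) and shows how the schema would sit in a request body, plus a cheap sanity check of the reply. `build_structured_payload` and `schema_problems` are hypothetical helpers; for full validation, a dedicated library such as the `jsonschema` package is the safer choice.

```python
import json

# The receipt_data schema from the Advanced section, as a Python dict.
RECEIPT_SCHEMA = {
    "name": "receipt_data",
    "schema": {
        "type": "object",
        "properties": {
            "store": {"type": "string", "description": "Store name"},
            "date": {"type": "string", "description": "Purchase date"},
            "total": {"type": "number", "description": "Total amount"},
        },
        "required": ["store", "date", "total"],
        "additionalProperties": False,
    },
    "strict": True,
}


def build_structured_payload(model: str, prompt: str, json_schema: dict) -> dict:
    """Request body in OpenAI's Structured Outputs shape (assumed provider support)."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "response_format": {"type": "json_schema", "json_schema": json_schema},
    }


def schema_problems(reply: str, json_schema: dict) -> list:
    """List missing required keys and, when additionalProperties is false,
    unexpected keys. Not a full JSON Schema validator (types are not checked)."""
    data = json.loads(reply)
    schema = json_schema["schema"]
    problems = [f"missing: {k}" for k in schema.get("required", []) if k not in data]
    if schema.get("additionalProperties") is False:
        problems += [f"unexpected: {k}" for k in data if k not in schema["properties"]]
    return problems


if __name__ == "__main__":
    payload = build_structured_payload("YOUR_MODEL_ID", "Extract the receipt data.", RECEIPT_SCHEMA)
    print(json.dumps(payload["response_format"], indent=2))
    print(schema_problems('{"store": "ACME", "extra": 1}', RECEIPT_SCHEMA))
    # ['missing: date', 'missing: total', 'unexpected: extra']
```

With `"strict": true`, OpenAI requires every property to be listed in `required` and `additionalProperties` to be `false`, which the `receipt_data` schema already satisfies.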